ACGT <-> ACDEFGHIKLMNPQRSTVWY <-> ABCDEFG

Proteomusic, representing twisted music inspired by genomes and proteomes, is the third effort following TWISTED HELICES' first two albums, Traversing a Twisted Path and Twisting in the Wind. Even though I've said that my goal is to make self-indulgent music, in this particular case this is purely a coding and pseudoscientific exercise based on the research I've been doing on protein structure since 1993 (both scientific and musical). Here, I am transforming three-dimensional protein structures into musical events. In other words, the structure of a protein completely determines the initial output for a particular song. The software is available as part of the RAMP distribution (for modelling protein structure), and an interface on the Protinfo web server for specifying your own PDB identifier or conformation file is in progress. This project is inspired by my first loves.

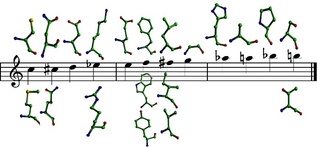

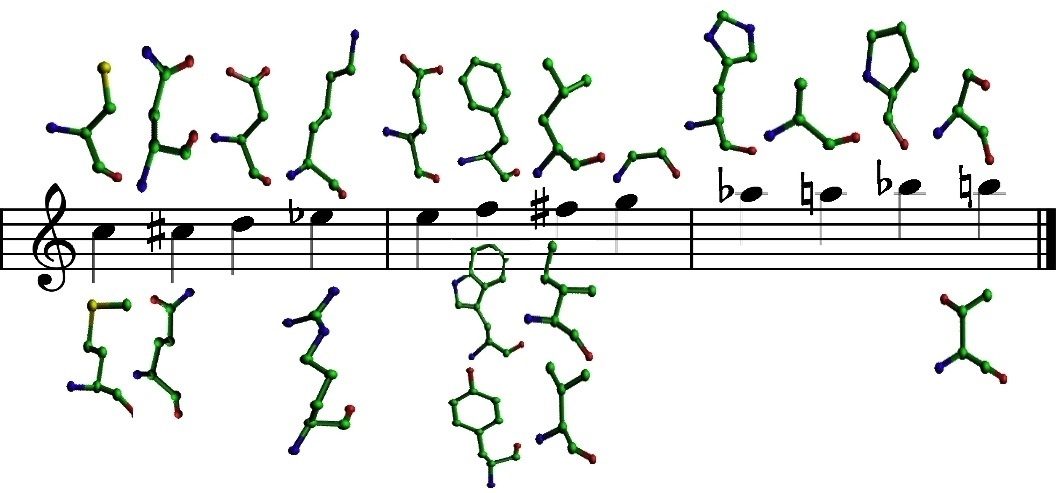

The beauty of what we're doing isn't just making and playing programmatic music but that we're giving people who cannot see protein structures the opportunity to hear them in exquisite detail. The result may be further improved by manipulating the MIDI files provided, or by playing with the structure_to_music program in the RAMP software suite under "music related programs" (without necessarily needing to recompile). A rough version of the patterns (codes) I've used is available and visually depicted below.

The program takes protein structure (PDB) and secondary structure (DSSP) files as input and generates a file that is used by the program midge to produce a MIDI file. It sequentially examines each residue and assigns notes depending on the scale used (for the track translation, the default assignments apply: melodies, bass, and bends are in C major, while the chords and tuplets draw on all 12 notes/pitches of the chromatic scale). The beat/tempo and percussion are chosen by the secondary structure (helix: fastest; strand: slowest; coil: in between). Some of the clever considerations are the choices of notes and percussion, the consideration of interacting residues and atoms, the attack and decay rates based on potential functions for protein structure prediction, the effects used, and the time signature. A song generated this way could be considered an aural representation of a protein structure, much as ribbons and colours are used to present visual representations, and may enable blind people to "hear" protein structures (I do this all the time now: I can recognise protein structures by ear as well as by my one eye, which is fantastic since I can't see in stereo but I can hear in it).
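As a rough illustration of the idea (not the actual structure_to_music code), here is a minimal Python sketch of walking a sequence residue by residue, picking a C major note for each residue and a tempo from its secondary structure. The residue-to-note rule, the tempo values, and all function names are assumptions made up for this example; only the ordering helix > coil > strand comes from the description above. The one-letter DSSP codes are grouped in the usual way (H, G, I as helix; E, B as strand; everything else as coil).

```python
# Hypothetical sketch: none of these tables are taken from structure_to_music.
AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"

# MIDI note numbers for one octave of C major (C4 D4 E4 F4 G4 A4 B4).
C_MAJOR = [60, 62, 64, 65, 67, 69, 71]

# Assumed tempi in beats per minute; only the ordering follows the text
# (helix fastest, strand slowest, coil in between).
TEMPO_BPM = {"helix": 160, "coil": 120, "strand": 80}

HELIX_CODES = {"H", "G", "I"}
STRAND_CODES = {"E", "B"}


def ss_class(dssp_code):
    """Collapse a one-letter DSSP code into helix, strand, or coil."""
    if dssp_code in HELIX_CODES:
        return "helix"
    if dssp_code in STRAND_CODES:
        return "strand"
    return "coil"


def residue_to_event(residue, dssp_code):
    """Return (midi_note, tempo_bpm) for one residue.

    Assumed rule: the residue's position in the 20-letter alphabet selects
    a scale degree, wrapping into higher octaves every seven residues.
    """
    index = AMINO_ACIDS.index(residue)
    octave, degree = divmod(index, len(C_MAJOR))
    note = C_MAJOR[degree] + 12 * octave
    return note, TEMPO_BPM[ss_class(dssp_code)]


def sequence_to_events(sequence, dssp_codes):
    """Examine each residue in order and collect its musical event."""
    return [residue_to_event(r, s) for r, s in zip(sequence, dssp_codes)]
```

Calling `sequence_to_events("ACD", "HHE")` gives one (note, tempo) pair per residue. A real transformation would layer bass, chords, bends, and percussion on top of this, and would draw on interacting residues and pseudoenergies rather than sequence position alone.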

There is some creativity involved in making the "right" choices so that the transformation sounds "decent" (just like molecular graphics tools have to make creative choices about rendering proteins visually). I was pleasantly surprised when I first started doing this at how good it all sounded. There is a biological rationale behind the default chemical logic used that explains this (see below). It also uses nonlocal interactions and pseudoenergies generated by my all atom function for the multilayered chords, triplets, and bends, so it actually isn't as dissonant as it could be. If you listen carefully, you can definitely "hear" the protein's structure. Over several hours of listening (again, much like visualisation), I've become good at telling grossly correct from incorrect structures (decoys), simple from complex topologies, and structural relatedness. At first listen, for many people, the wall of sound might be too complex to deconvolute in their heads and relate to protein structure (though this is definitely doable). I will release compositions based on a track by track build up of the sounds in the near future. For now, you can download the MIDI file representing the composition and create and listen to your own (minimalist and varied) mixes.

Proteomusic by TWISTED HELICES is an evolving project, where I will include songs and perturbations based not only on an individual gene or protein's structure, but within and across genomes and proteomes. Thus, tracks based on protein interactions, whole proteomes, comparative proteomes, evolution, and designed proteins are in progress. In fact, using a Linux-based DJ mixer and running the program via the command line, one can make tracks of thousands of proteins from different organisms and mix them together to create a "supertrack". The initial set of compositions (album) will involve programmatic transformations of interesting structure combinations; later I will focus on adding human instruments manually to complement the machine generated tracks. Further, considering DNA, RNA, and small molecules will definitely happen as our own research grows in these areas.

The Proteomusic web module is part of the Protinfo server. The goal here is to take any PDB file or identifier as input and, at the very least, generate a .mid (MIDI) file via a CGI interface. At this point, only direct General MIDI transformations of protein structures are produced by the software, but the MIDI files may be resequenced, and guitars and other instruments can be "manually" added.

This project also marks my complete move away from Microsoft based software to do any music related work. I now use all Linux software. Specifically for this album, I used the programs midge (transforms music generated by the structures into MIDI files), timidity (software synthesiser), rosegarden (sequencer), and audacity (audio recording and production). I have also used a powerful Apple computer (along with Logic) to control the studio equipment used to make the sounds.

As usual, all the music in the album can be gotten online without any restriction, and I encourage (commercial and personal) copying and distribution of the album, in accordance with the Free Music Philosophy.

The final aspect I need to point out is that what we need are extremely creative pictorial/visual representations (better than what we see in standard protein visualisation software) that match the music being played. In other words, as the music proceeds through the sequence and secondary structure and interacts with various residues and atoms, these should be depicted visually, which I hope will form the really cool music videos for these songs. This requires a bit of programming (but is doable with existing visual tools) and hopefully will be done one day.

Once I got over the desire to belong in traditional pop/rock/metal/punk/etc. settings, I decided to make music for music's sake. What that means is a deep question and a pretty subjective and personal one at that.

I want, perhaps need, to go beyond what people call "music". In that sense I'm influenced by the thinking of people like John Cage. Cage is alleged to have said that the sounds he heard when he sat by the window were much better than anything he heard on the radio. I can totally relate to that way of thinking, and all the music I create is done with such an inspiration in mind: that the world around us is full of music and you just have to listen.

Cage is also the author of the famous 4'33'' piece. The entire piece is empty and doesn't contain any musical notes to be played, and the pianist (or any other instrumentalist) doesn't do anything for 4 minutes and 33 seconds. The idea was that the audience would listen to its own ambient sounds, like shuffling feet, coughing and sneezing, clearing throats, scratches, and so on. It was radical when it was first done and works only for the person who does it first.

Ultimately, John Cage is influential simply because we're convergent on the same notions of "aleatoric" music, which refers to elements of composition left to chance. That describes not only my life story but how all of life works (biology). I believe Proteomusic achieves that goal. It's a shame that, like Cage's music, it's so inaccessible to people since they're all in a box and find it hard to escape. (Not to insult you or anything like that.)

Science and music are both my addictions. I just make more guaranteed money doing the first, although as a graduate student I made more money from my music (my first CD sold over 6000 copies) than from my stipend. But society rewards one more than the other and in the end, while music invigorates my soul, it doesn't directly cure disease (yet, though this project can make that happen).

Initially, the plan was to use a Yamaha Clavinova CVP 305, which is a really high end digital piano/synthesiser released in 2004. It really has some fantastic nondrum (i.e., synthesiser) sounds that the Yamaha DTX 900 drum machine/sequencer simply cannot match. This is noticeable even with compression, which makes the Clavinova sound better (it depends on whether you're used to listening to your music "loud"; this is the way most songs on radio are presented, since they have fixed volume limits, so they compress the hell out of the songs and reduce the dynamic range, which is largely nonexistent in rock/pop music anyway).

On the other hand, the DTX 900 drum sounds are simply not comparable to any other synthesiser I'd heard as of 2013.

So the real answer then is to do the tracks so that the drums come from the DTX and the keyboards come from the Clavinova (sigh, synchronisation is nontrivial). But I feel I'm going to gradually evolve the album from the standard C major scale and the standard kit from the Clavinova to different scales and different sounds from both the Clavinova and the DTX 900 as I change the PDB structures (I still have a bunch of ideas to go through, including transition, evolution, mutation, variation, etc.). With this goal, the first two tracks, translation and twisted helices, are recorded 100% on the Clavinova, and the next track, design, is recorded using the Yamaha DTX 900 only (but with the Sci Fi kit). Future versions should see both these awesome sound sources fully integrated and also a lot more musical planning in terms of scales and time signatures.

I did this on the iMac (my first recording on it), using Logic Express to process the MIDI file from structure_to_music; I then used sox (a Unix/Linux tool) to record and process the input, and lame for mp3 encoding (the latter two tools are command line ones, which goes to show how much free software is crucial to doing this efficiently using my famous shell scripting style :).

structure_to_music now can specify any bank/variation (MSB/LSB) for all the nondrum sounds (doing so for the drum kit is just a few more characters of code but with the DTX 900 I can just move the dial and change kits so it's easier to not specify it). This will let me explore the Clavinova soundscapes better from the command line, something I've had difficulty doing in the past.
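To make the bank/variation idea concrete, here is a minimal Python sketch of the raw MIDI bytes that select a voice by bank: a Bank Select MSB (controller 0), a Bank Select LSB (controller 32), and then a Program Change. This is standard MIDI rather than the syntax midge or structure_to_music actually uses, and the function name and example values are hypothetical; the real MSB/LSB numbers for a given Clavinova voice have to come from the instrument's data list.

```python
def bank_program_messages(channel, msb, lsb, program):
    """Build the raw MIDI bytes selecting a bank (MSB/LSB) and a program.

    channel is 0-15; msb, lsb, and program are 0-127.
    """
    limits = (("channel", channel, 15), ("msb", msb, 127),
              ("lsb", lsb, 127), ("program", program, 127))
    for name, value, upper in limits:
        if not 0 <= value <= upper:
            raise ValueError(f"{name} must be between 0 and {upper}")

    control_change = 0xB0 | channel  # Control Change status byte
    program_change = 0xC0 | channel  # Program Change status byte
    return bytes([
        control_change, 0, msb,    # controller 0: Bank Select MSB
        control_change, 32, lsb,   # controller 32: Bank Select LSB
        program_change, program,   # pick the voice within that bank
    ])
```

For example, `bank_program_messages(0, 0, 112, 0).hex(" ")` yields the eight bytes to send on the first channel. The bank select messages must arrive before the Program Change for the bank to take effect.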

There are other applications to this approach of creating context sensitive music. One label for the research we do is "interactomics". We can refer to an atomic level interaction, an organismal (population) level interaction, and everything in between (atoms, molecules, pathways, cells, tissues, organs, individuals, populations). What makes this protein music unique, especially at the time it was conceived (way back in the mid 1990s) and created (2010+), is that it takes into account the context sensitivity of atoms and residues present in single protein chains. This work could be extended to hearing other interacting complex dynamical systems, including (as just a few possible examples) neurons in the brain (aka "the connectome"), traffic patterns in populated cities, and stellar dynamics and cosmic motion.

Our publication list contains more information on a lot of the technical work done, including the development of an all atom knowledge-based statistical potential of mean force, design of drug-like peptides (which are small pieces of proteins), and so on.